Is SEO Content Automation Still a Traffic Trap in 2026? A Practitioner's Reflection

As we enter 2026, the content marketing battlefield is no longer about who writes the most eloquent articles, but about who runs the most stable, intelligent “content assembly line,” one that keeps capturing search intent over time. Over the past few years, our team has gone from manual topic selection, writing, and publishing, to experimenting with various AI writing tools, and finally to building a nearly fully automated SEO content system. The pitfalls we’ve encountered and the growth we’ve achieved are equally real. Today, I don’t want to share another “Top 30 SEO Tools List,” but rather how “automated content production” actually fares in a real business environment: is it the ultimate solution for freeing up productivity, or an elaborate trap for generating digital garbage?

From Tool Stacking to Process Failure: Our Initial Mistakes

Like many teams, our initial understanding of SEO automation was limited to the “tool” level. We listed all the software on the market that claimed to generate SEO articles, analyze keywords, and monitor rankings. We naively believed that by stringing these tools together, we could build an efficient content pipeline. The result? We got a pile of keyword reports, a large number of articles with similar structures but hollow content, and traffic curves that barely moved.

Where did the problem lie? The core was process fragmentation. Keywords generated by Tool A, when imported into Tool B, produced articles with weak relevance to the website’s actual business. Tool C was responsible for publishing, but the page loading speed or structure of the publishing platform (e.g., WordPress) might itself be detrimental to the initial ranking of new content. Each tool “performed well” in its own segment, but when connected, they formed a system with severe value leakage. Even worse, no one was truly responsible for the final “traffic results”; everyone was only concerned with the vanity metric of “article count.”

The Turning Point: Placing “Intent Understanding” at the Center of the Process

The real transformation began when we redefined the core of the problem: we didn’t need an “article generator,” but an intelligent agent that could understand the complete chain of “search intent - content matching - publishing optimization.” This process had to be a closed loop, and the sole standard of success was whether the resulting content was indexable, rankable, and convertible.

At this point, we began to systematically evaluate platforms that claimed to offer “end-to-end” solutions. One key scenario we tested: given a product page on our e-commerce website, could the system automatically discover long-tail questions related to that product (such as People Also Ask queries) that have real search volume? Could it generate a blog post that genuinely supplements the product page and addresses users’ information needs? And could it then publish that post automatically to the correct category on our site, with the URL structure, meta tags, and internal links all following SEO best practices?

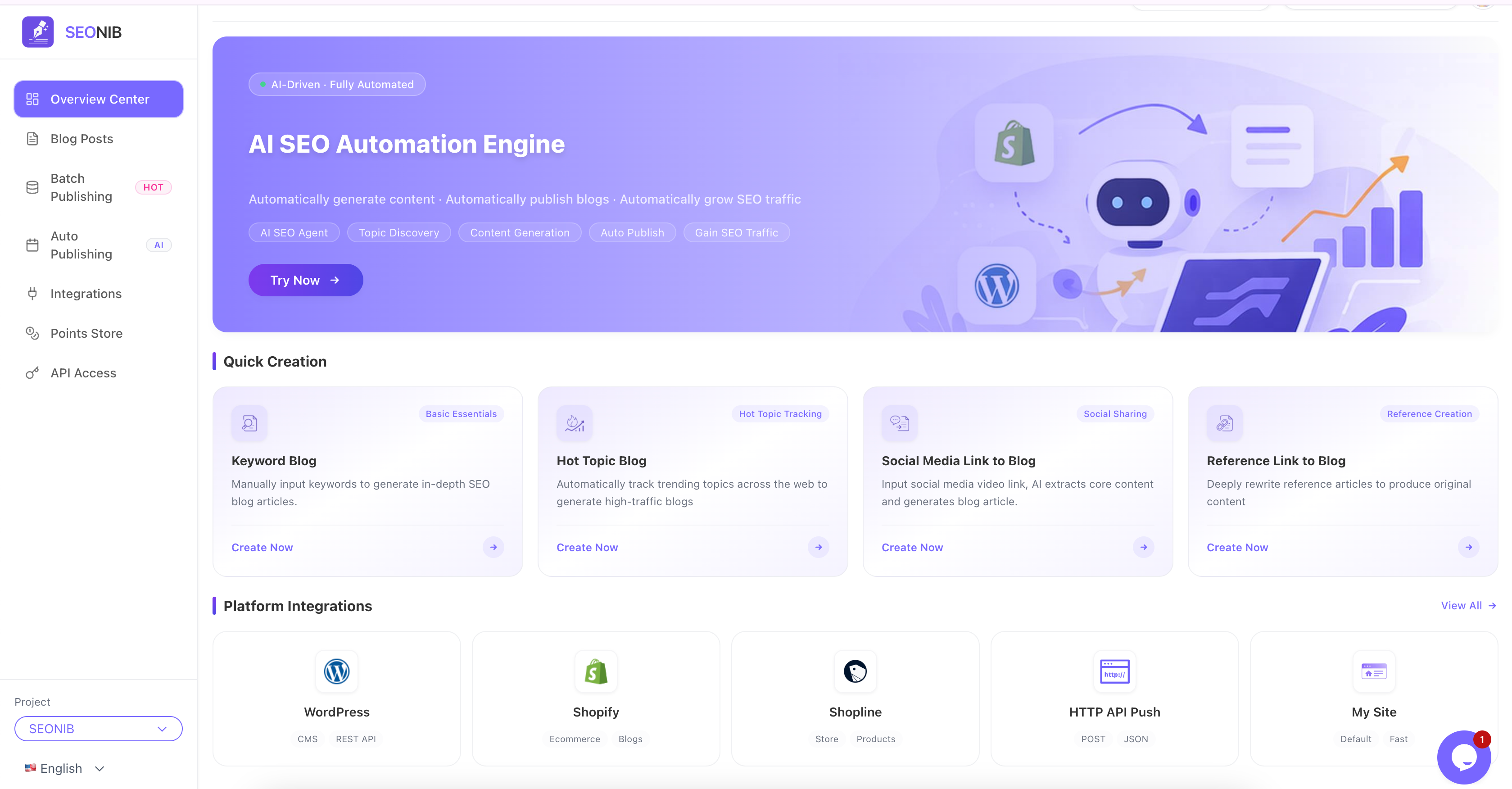

It was during this in-depth testing phase that SEONIB came onto our radar. What attracted us was not the power of any single feature, but the closed-loop logic it attempted to build: discovering content needs from multiple sources (keywords, PAA, tables), generating targeted, structured content, and then publishing it to a CMS (like our Shopify store) in one click, with the basic SEO elements already set. It felt less like a tool and more like a pre-configured workflow automation agent. We decided to integrate it into our core process for a small-scale trial.

The Quality Paradox of Automated Content and Editorial Intervention

After integrating an automated pipeline like SEONIB, the first challenge we faced was not technical, but the paradox of quality versus scale. Fully automated content was impeccable in grammar and structure, and even had appropriate keyword density. But an experienced editor could immediately spot the issues: a lack of unique perspectives, overly generic case studies, and a superficial understanding of complex problems.

The bulk-generated content disappointed us until we adjusted our strategy. We stopped pursuing fully unsupervised operation and instead positioned the automated process as a “super content assistant.” The specific approach was:

- Source Control: We strictly screened information sources. Instead of bulk importing broad keywords, we focused on extracting real user questions from product Q&As, customer service logs, and competitor reviews as “seeds” for content generation.

- Outline Review: The article outlines generated by the system had to be “intent-calibrated” by editors to ensure the article’s angle aligned with the brand tone and users’ actual pain points.

- Data and Case Study Injection: Editors manually added our own business data, user case studies, or in-depth analysis paragraphs to the automatically generated framework.

In this way, SEONIB’s role shifted from “writer” to “efficient content framework builder and first draft writer,” saving editors over 70% of their basic work time and allowing them to focus on value-adding aspects – injecting insights and uniqueness.
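As a rough illustration of the source-control step, here is a minimal sketch of pulling recurring user questions out of raw support messages to use as content seeds. It is not how SEONIB or our pipeline is implemented; the function names (`extract_seeds`, `looks_like_question`), the list of question words, and the `min_count` threshold are all assumptions made for this example.

```python
import re
from collections import Counter

# Hypothetical list of words that often open a question in English.
QUESTION_WORDS = {
    "how", "what", "why", "when", "where", "which",
    "can", "does", "do", "is", "are", "should", "will",
}


def normalize(text: str) -> str:
    """Lowercase, collapse whitespace, and drop trailing punctuation."""
    text = re.sub(r"\s+", " ", text.strip().lower())
    return text.rstrip("?!. ")


def looks_like_question(text: str) -> bool:
    """Crude heuristic: ends with '?' or starts with a question word."""
    stripped = text.strip()
    if stripped.endswith("?"):
        return True
    first_word = stripped.lower().split(" ", 1)[0]
    return first_word in QUESTION_WORDS


def extract_seeds(messages, min_count=2, limit=10):
    """Return (question, count) pairs asked at least `min_count` times."""
    counts = Counter(normalize(m) for m in messages if looks_like_question(m))
    return [(q, n) for q, n in counts.most_common(limit) if n >= min_count]


if __name__ == "__main__":
    sample = [
        "How do I wash this jacket?",
        "how do I wash this jacket",
        "Thanks, order arrived",
        "Is it waterproof?",
        "Is it  waterproof?",
        "Is it waterproof",
        "Where is my refund?",
    ]
    for question, count in extract_seeds(sample):
        print(count, question)
```

Exact-match counting after normalization is deliberately simple. A real setup would probably group paraphrases as well, but even this version filters out one-off questions and non-questions before anything reaches the generator.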

The Hidden Bottleneck After Scaling: Indexing and Timeliness

As content began to be automatically published at a rate of dozens of articles per day, new bottlenecks quietly emerged: search engine indexing couldn’t keep up with the publishing speed. A large number of pages sat for extended periods in what Google Search Console reports as “Discovered – currently not indexed,” becoming digital islands. Furthermore, for content based on hot topics, the lag between spotting a trend and having the article generated, published, and indexed could mean the content was already outdated by the time it appeared in search results.

Our coping strategy was layered management:

- Cornerstone Content: For evergreen topics, we didn’t pursue immediate indexing. Instead, we relied on the website’s overall authority and internal linking structure so that these pages would be crawled naturally. We regularly used SEONIB’s bulk publishing feature to spread these articles out evenly and avoid overwhelming the crawler.

- Timely Content: For trending topics, we configured higher publishing priorities and automatically pushed them to more prominent, higher-authority placements on the website (e.g., the latest articles module on the homepage). At the same time, we integrated instant indexing APIs (like the Google Indexing API) to actively push these critical URLs. Note that Google documents its Indexing API as intended for job posting and livestream pages, so check eligibility before relying on it.

In this process, the ability of the automated pipeline to work smoothly with the website’s technical stack (e.g., CDN, caching strategies) and search engine interfaces became more important than content generation itself.
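The layered approach above can be sketched as a small scheduling routine: evergreen articles are spread across days under a daily cap, while timely articles go out immediately and are flagged for an indexing push. This is a sketch under stated assumptions, not our actual configuration: `Article`, `plan_publishing`, and the `evergreen_per_day` cap are hypothetical names. The `indexing_payload` helper only builds the JSON body used by the Google Indexing API's `urlNotifications:publish` method; it does not send anything, and the required OAuth authentication is omitted.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Endpoint of the Google Indexing API publish method (request is not sent here).
INDEXING_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"


@dataclass
class Article:
    slug: str
    timely: bool  # True for trend-driven content, False for evergreen


def plan_publishing(articles, start, evergreen_per_day=5):
    """Return a list of (slug, publish_date, push_for_indexing) tuples.

    Timely articles are scheduled on `start` and flagged for a push.
    Evergreen articles are spread out, at most `evergreen_per_day` per day.
    """
    if evergreen_per_day < 1:
        raise ValueError("evergreen_per_day must be at least 1")
    plan = []
    evergreen_seen = 0
    for article in articles:
        if article.timely:
            plan.append((article.slug, start, True))
        else:
            day_offset = evergreen_seen // evergreen_per_day
            plan.append((article.slug, start + timedelta(days=day_offset), False))
            evergreen_seen += 1
    return plan


def indexing_payload(url: str) -> dict:
    """Build the JSON body for a URL_UPDATED notification."""
    return {"url": url, "type": "URL_UPDATED"}


if __name__ == "__main__":
    queue = [
        Article("care-guide", timely=False),
        Article("trend-report", timely=True),
        Article("size-guide", timely=False),
        Article("fabric-faq", timely=False),
    ]
    for slug, when, push in plan_publishing(queue, date(2026, 1, 5), evergreen_per_day=2):
        print(when.isoformat(), slug, "push" if push else "natural crawl")
```

Keeping the scheduling decision separate from the API call makes it easy to review the plan before anything is published or pushed.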

How Should We View the Value of “Automated SEO Content” in 2026?

Looking back at this journey, my conclusion is: purely automated, unattended content generation focused on quantity has limited value, and can even be harmful, in the 2026 search ecosystem. However, using automation as the foundation of the core workflow, with human intelligence providing strategic guidance and calibration at key stages – a “human-machine collaboration” model – has become an inevitable choice for scaled content operations.

Its core value is no longer “replacing writers,” but:

- Major Efficiency Gains: Freeing content creators from repetitive information gathering, structure building, and basic writing.

- Far Broader Coverage: Economically covering a vast number of long-tail search intents, which is not feasible with manual writing alone.

- Process Standardization and Iteration: Building proven SEO content models into automated processes, ensuring a stable baseline for output quality.

For teams looking to build content competitiveness in 2026, my advice is no longer “try these 30 tools,” but: first, clarify your content’s core value proposition and user decision journey, then find or build an automated system that can seamlessly embed your “value injection points” into the entire “discovery to publishing” workflow. Whether this system has a specific name is not important; what matters is whether its architecture is centered around “understanding and satisfying search intent” and can flexibly adapt to your unique business characteristics.

FAQ

Q1: In 2026, can content generated entirely by AI still achieve good Google rankings?

A1: At its core, Google’s ranking has always rewarded the match between content value and search intent. Purely AI-generated content that is unoptimized and lacks unique information or first-hand experience will find it increasingly difficult to rank. However, using AI as a powerful assistant to produce high-quality content under the guidance of human expertise has become mainstream. The key is whether the content truly solves the problem, not who wrote it.

Q2: Will automated content production lead to duplicate or low-quality website content, resulting in penalties?

A2: If the strategy is to batch-generate low-quality, repetitive content, the risk is extremely high. A reasonable automation strategy instead uses unique data and business scenarios as information sources to generate differentiated content frameworks, which are then refined by humans. The focus should be on “incremental value,” not on restating information already available online. Monitoring user experience metrics such as bounce rate and dwell time is crucial.

Q3: Where should small teams or startups begin building content automation?

A3: Don’t aim for full-stack automation from the start. Begin with the biggest pain point: for example, using tools to automatically convert product FAQs into blog post outlines, or extracting high-frequency questions from customer inquiries to generate content ideas. Choose a platform that integrates easily with your existing CMS (like WordPress or Shopify) and lets you control content sources and review processes. Start with small-scale testing to validate the traffic impact before gradually scaling up.

Q4: How effective is multilingual SEO content automation, and what should you watch out for?

A4: For expanding into global markets, multilingual automation is a powerful tool, but it has serious pitfalls. Directly publishing machine-translated content yields poor results. The generation process needs to be “localized creation,” meaning content is generated based on the search habits and cultural context of the target market, or at least thoroughly proofread by native speakers. Simply translating keywords can lead to intent deviation.

Q5: How do you measure the ROI of automated content work?

A5: Abandon the “article count” metric. Focus on core business metrics: how much qualified organic traffic (from non-branded keywords) does the content generated by the automated process bring in? What is the conversion rate of this traffic (e.g., registrations, inquiries, purchases)? How many articles have entered the top 10 search results? Then compare the production cost of each effective piece of content (including tool costs and human calibration time) against its lifetime value.
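To make the arithmetic in A5 concrete, here is a minimal sketch of the calculation. All field names and the sample numbers are hypothetical. It also makes two simplifying assumptions: an “effective” article is one that ranks in the top 10, and lifetime value is approximated by a flat value per conversion.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ContentBatch:
    articles_in_top10: int        # assumed definition of "effective" articles
    tool_cost: float              # platform and API spend for the period
    editor_hours: float           # human calibration time
    editor_hourly_rate: float
    organic_sessions: int         # non-branded organic traffic only
    conversions: int              # registrations, inquiries, purchases
    value_per_conversion: float   # simplified stand-in for lifetime value


def total_cost(batch: ContentBatch) -> float:
    """Tool spend plus the cost of editor time."""
    return batch.tool_cost + batch.editor_hours * batch.editor_hourly_rate


def cost_per_effective_article(batch: ContentBatch) -> Optional[float]:
    """Cost divided by top-10 articles; None if nothing ranks yet."""
    if batch.articles_in_top10 == 0:
        return None
    return total_cost(batch) / batch.articles_in_top10


def conversion_rate(batch: ContentBatch) -> float:
    """Conversions per non-branded organic session."""
    if batch.organic_sessions == 0:
        return 0.0
    return batch.conversions / batch.organic_sessions


def roi(batch: ContentBatch) -> float:
    """(value generated - cost) / cost."""
    cost = total_cost(batch)
    value = batch.conversions * batch.value_per_conversion
    return (value - cost) / cost


if __name__ == "__main__":
    batch = ContentBatch(
        articles_in_top10=25, tool_cost=500.0, editor_hours=40.0,
        editor_hourly_rate=50.0, organic_sessions=10_000,
        conversions=200, value_per_conversion=30.0,
    )
    print("cost per effective article:", cost_per_effective_article(batch))
    print("conversion rate:", conversion_rate(batch))
    print("ROI:", roi(batch))
```

Note that article count never appears as an input: only ranking, traffic, and conversion figures drive the result.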
