2026: How I Scaled SaaS Blog Production 10x with an Automated Pipeline

As I write this, I’ve just dealt with a “minor” incident. An automatically published blog post about a product feature for our Southeast Asian market sparked a small wave of discussion on social media because of a subtle deviation in one localized term. It reminded me of three years ago, when we struggled to produce even two English blog posts per week. The journey from that chaotic scramble to our current near-autonomous system, which manages a blog matrix spanning five languages and dozens of topics, is perhaps more valuable than the final impressive “10x efficiency” figure, pitfalls and iterative thinking included.

From “What to Write” to “Where It Comes From”: A Paradigm Shift in Content Sourcing

Our early content production process was classic: the marketing department pitched ideas, the SEO team provided keywords, and then came the writing. Bottlenecks quickly emerged: the keyword pool was rapidly depleted, writers fell into repetitive labor, content became highly homogenized, and traffic growth quickly plateaued. We realized the problem wasn’t “how to write,” but “what to write.” High-quality, continuous content production must first solve the “source” problem.

We tried having writers scour industry reports, read competitor blogs, and even summarize YouTube videos. The result? Skyrocketing labor costs, unstable output, and inconsistent quality. A writer spending half a morning watching a video, taking notes, and then writing was inefficient and heavily reliant on individual ability. This was simply not a scalable SaaS approach.

The turning point came when we began systematically categorizing content sources and exploring possibilities for automation. We divided sources into four categories:

- Core Keywords: The foundation of SEO, but requiring outward expansion.

- Industry Trends & Hot Topics: Sourced from news aggregators, social media discussions, and specific forums, prioritizing timeliness.

- Video/Podcast Content: YouTube, industry conference recordings; high information density but time-consuming to distill.

- Competitor & Related Product Pages: Analyzing their phrasing, feature focus, and user reviews.
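
To make the four categories concrete, here is a minimal Python sketch of how incoming sources might be tagged before any processing. The class names, the `classify` function, and the host lists are all hypothetical illustrations, not part of our actual pipeline or of any tool's API.

```python
from dataclasses import dataclass
from enum import Enum
from urllib.parse import urlparse


class SourceType(Enum):
    KEYWORD = "core_keyword"
    TREND = "industry_trend"
    MEDIA = "video_or_podcast"
    COMPETITOR = "competitor_page"


@dataclass(frozen=True)
class ContentSource:
    raw: str
    source_type: SourceType


# Hypothetical host lists; a real pipeline would maintain these per market.
MEDIA_HOSTS = {"youtube.com", "youtu.be"}
TREND_HOSTS = {"news.ycombinator.com", "reddit.com"}


def classify(raw: str) -> ContentSource:
    """Assign one of the four categories to a keyword or a URL."""
    parsed = urlparse(raw)
    # Anything that is not an http(s) URL is treated as a plain keyword.
    if parsed.scheme not in ("http", "https"):
        return ContentSource(raw, SourceType.KEYWORD)
    host = parsed.netloc.lower().removeprefix("www.")
    if host in MEDIA_HOSTS:
        return ContentSource(raw, SourceType.MEDIA)
    if host in TREND_HOSTS:
        return ContentSource(raw, SourceType.TREND)
    # Assumption: any other URL is a competitor or related product page.
    return ContentSource(raw, SourceType.COMPETITOR)
```

Tagging sources up front lets each category be routed to its own extraction settings instead of treating every input the same way.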

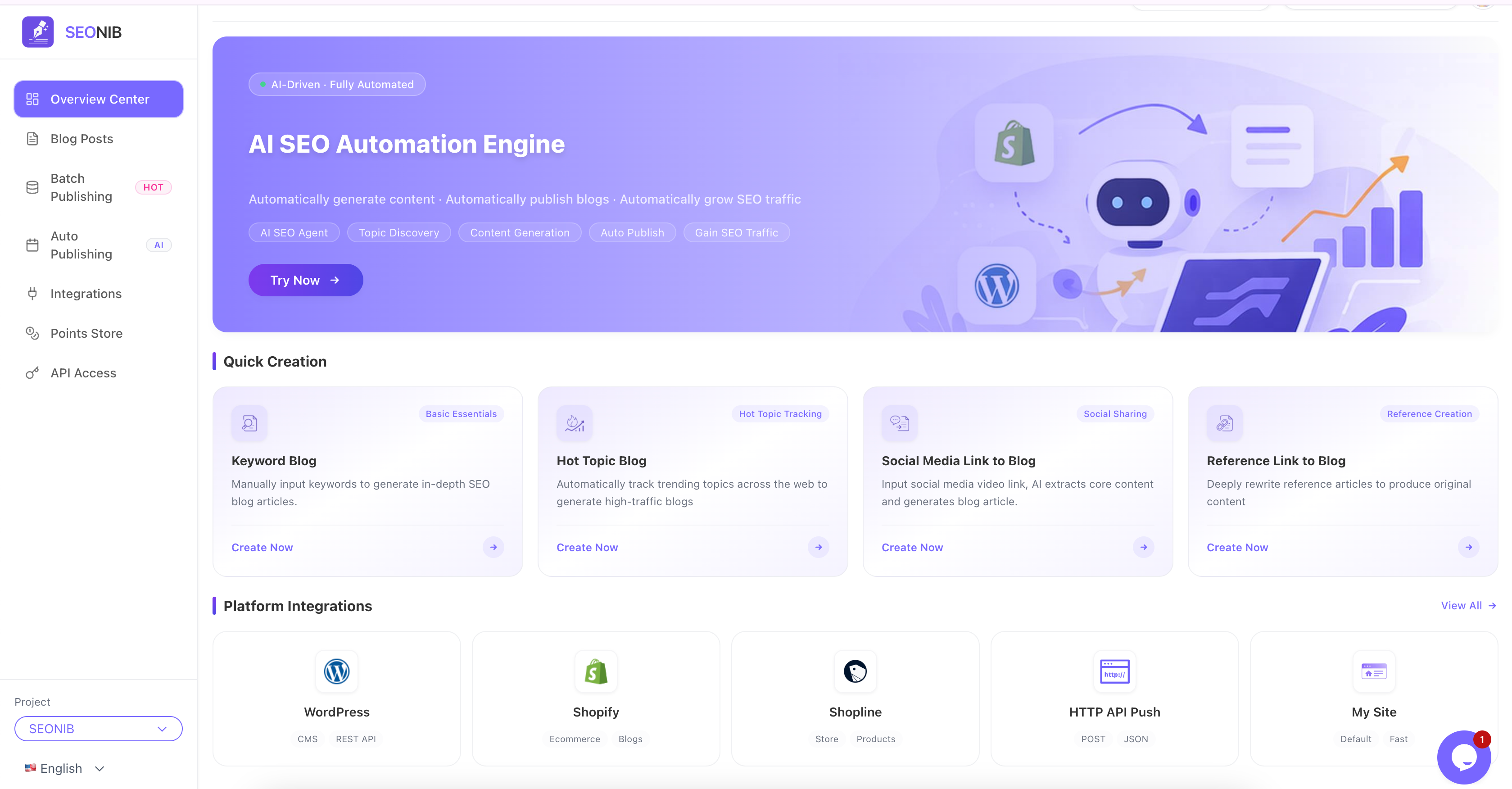

Manually processing these four types of sources was a disaster. We needed a “middleware” that could understand these different input formats, extract core themes, viewpoints, and facts, and then organize them into coherent articles. This is precisely where we introduced SEONIB. Initially, it was an experimental trial to have it parse a few competitor pages and YouTube links to generate drafts. To our surprise, it not only extracted key information but also identified the salient points from the spoken language in the original videos and reorganized them into clearly structured blog paragraphs. This showed us the possibility of automating the “information digestion” phase.

“Batch Generation” Isn’t Magic: Balancing Configuration, Quality, and Risk

Once the “source” problem was solved, we naturally moved to the next stage: batching. But while “batch generation” sounds appealing, the practical implementation is full of nuances. The biggest misconception is believing that “configure once, and it’s done forever.” In reality, the quality of batch generation depends entirely on the meticulousness of the initial configuration and an understanding of the generation logic.

Mistakes we made included:

- Topic Drift: Given a source about “CRM integration,” the AI might generate an article that delves deeply into “API design philosophy.” While related, the core topic was missed.

- Fact Rephrasing: The AI simply rephrased facts from the source content with different sentence structures, lacking in-depth analysis or added value, resulting in hollow content.

- Tone Mismatch: A blog generated from a rigorous technical white paper might be too academic; one generated from a casual podcast might be too informal.

Our counter-strategy was to establish “generation templates” and “quality checkpoints.” In SEONIB, we no longer just drop in a link. Instead, we configure:

- Core Instructions: Clearly specifying whether the article should be a “how-to guide,” “in-depth analysis,” “product comparison,” or “trend interpretation.”

- Style Anchors: Providing one or two of our approved past articles as references for tone and structure.

- Must-Include Points: Listing 3-5 key points that must be covered to keep the article on topic.

- Exclusions: Explicitly stating competitor names, outdated terminology, or controversial statements we wish to avoid.
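
The four settings above can be captured as a small, validated data structure. The sketch below is only an illustration of the idea: `GenerationTemplate`, its field names, and the instruction text it produces are assumptions of this example, not SEONIB's actual configuration format.

```python
from dataclasses import dataclass, field

# The four article types named in "Core Instructions".
ARTICLE_TYPES = {
    "how-to guide",
    "in-depth analysis",
    "product comparison",
    "trend interpretation",
}


@dataclass
class GenerationTemplate:
    article_type: str
    style_anchors: list[str] = field(default_factory=list)  # URLs or titles of approved articles
    must_include: list[str] = field(default_factory=list)
    exclusions: list[str] = field(default_factory=list)

    def validate(self) -> None:
        """Enforce the limits described in the list above."""
        if self.article_type not in ARTICLE_TYPES:
            raise ValueError(f"unknown article type: {self.article_type!r}")
        if not 1 <= len(self.style_anchors) <= 2:
            raise ValueError("provide one or two style anchors")
        if not 3 <= len(self.must_include) <= 5:
            raise ValueError("list 3-5 must-include points")

    def to_instructions(self) -> str:
        """Render the template as plain-text instructions for a generator."""
        self.validate()
        lines = [f"Article type: {self.article_type}"]
        lines.append("Match the tone and structure of: " + ", ".join(self.style_anchors))
        lines.append("Must cover:")
        lines += [f"- {point}" for point in self.must_include]
        if self.exclusions:
            lines.append("Never mention: " + ", ".join(self.exclusions))
        return "\n".join(lines)
```

Validating the template before any generation run catches an empty or overloaded configuration early, when it is still cheap to fix.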

Before batch generation, we conduct small-scale tests with 3-5 different types of sources, manually review the output, and fine-tune the above configurations. This iterative process takes about one to two days, but once dialed in, it guarantees a baseline quality for subsequent batch generation of dozens of articles. The core of batching isn’t “non-intervention,” but “pre-intervention and templating.”
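
A “quality checkpoint” can start as something very simple. The following sketch flags drafts that miss a must-include point or contain an excluded term. It assumes naive, case-insensitive substring matching, which is a deliberate simplification; `check_draft` and `CheckResult` are hypothetical names, and a check like this supplements the manual review rather than replacing it.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    missing_points: list[str]
    excluded_hits: list[str]

    @property
    def passed(self) -> bool:
        return not self.missing_points and not self.excluded_hits


def check_draft(draft: str, must_include: list[str], exclusions: list[str]) -> CheckResult:
    """Report must-include points absent from the draft and excluded terms present in it."""
    text = draft.casefold()
    missing = [p for p in must_include if p.casefold() not in text]
    hits = [e for e in exclusions if e.casefold() in text]
    return CheckResult(missing, hits)
```

Substring matching will miss paraphrased points, so a failed check is best read as “look at this draft first” rather than as a verdict.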

Deep Integration with CMS: The Final Automated Step from “Draft” to “Live”

Once content is generated, a bigger challenge awaits: publishing. We initially used WordPress, which required manually copying and pasting HTML, uploading featured images, setting categories and tags, SEO meta descriptions, and publication times. From finishing an article to making it live took another 15-20 minutes. For dozens of batch-generated articles, this was an unacceptable time sink.

We tried some general automation tools, but their integrations with CMS platforms like WordPress and Shopify were often fragile; a theme update or plugin conflict could disrupt the entire publishing process. What we needed was native, stable publishing capability that understood the CMS content model.

SEONIB provided a key solution here: instead of simulating front-end operations, it integrates through the CMS’s standard APIs (like WordPress’s REST API, Shopify’s Admin API). This means:

- High Stability: As long as the API remains unchanged, the publishing process is stable.

- Precise Field Mapping: It can accurately map titles, body text, images, tags, categories, SEO titles, and descriptions from the generated content to the corresponding fields in the CMS backend.

- Supports Scheduled Publishing: It can easily set up a publishing calendar for weeks or even months ahead, ensuring regular updates to the content matrix.
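
To show what field mapping and scheduling look like at the API level, here is a sketch that builds the JSON body for a scheduled post using WordPress's core REST API fields (`title`, `content`, `excerpt`, `status`, `date_gmt`, `categories`, `tags`, `featured_media`). How SEONIB does this internally is not something we can see, so treat the function below as our own illustration. SEO titles and descriptions are left out because they are typically stored by an SEO plugin rather than by WordPress core, and the field names depend on that plugin.

```python
from datetime import datetime, timezone
from typing import Optional


def build_wp_post_payload(
    title: str,
    html_body: str,
    excerpt: str,
    publish_at: datetime,
    category_ids: list[int],
    tag_ids: list[int],
    featured_media_id: Optional[int] = None,
) -> dict:
    """Build the JSON body for creating a scheduled WordPress post."""
    if publish_at.tzinfo is None:
        raise ValueError("publish_at must be timezone-aware")
    payload = {
        "title": title,
        "content": html_body,
        "excerpt": excerpt,
        # "future" schedules the post for the given date.
        "status": "future",
        "date_gmt": publish_at.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S"),
        # WordPress expects term IDs here, not names.
        "categories": list(category_ids),
        "tags": list(tag_ids),
    }
    if featured_media_id is not None:
        # ID of an image already uploaded to the media library.
        payload["featured_media"] = featured_media_id
    return payload
```

The resulting dictionary would be sent as an authenticated POST to the site's `/wp-json/wp/v2/posts` endpoint. Because categories and tags are referenced by ID, a real integration also needs a lookup step that maps names to IDs.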

We set up the publishing process so that generated content first enters a “pending publication” queue. A designated person quickly reviews it (focusing on the introduction, conclusion, and any obvious errors). Once confirmed, it can be submitted to the publishing schedule with a single click. The system automatically handles image uploads, format conversions, URL settings, and all other minutiae. This integration truly transforms content from a “digital file” into an “online asset,” completing the automated loop.
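
The review gate described above amounts to a small state machine. The sketch below models it under a few assumptions: the state names are ours, and the `REJECTED` state and the option to pull a scheduled post back to pending are additions for illustration that the workflow above does not spell out.

```python
from enum import Enum


class Status(Enum):
    PENDING = "pending_publication"
    SCHEDULED = "scheduled"
    PUBLISHED = "published"
    REJECTED = "rejected"  # assumption: reviewers can reject a draft


# Allowed moves between states; anything else is an error.
ALLOWED = {
    Status.PENDING: {Status.SCHEDULED, Status.REJECTED},
    Status.SCHEDULED: {Status.PUBLISHED, Status.PENDING},
    Status.PUBLISHED: set(),
    Status.REJECTED: set(),
}


def transition(current: Status, target: Status) -> Status:
    """Return the new status, or raise if the move skips the review gate."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target
```

Making the allowed transitions explicit means generated content cannot go from pending straight to published without passing through the reviewed, scheduled state.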

Multilingual and GEO Optimization: Navigating a Fragmented Search Landscape

Our users are global, and focusing solely on English content means abandoning a significant portion of the market. However, traditional multilingual localization is extremely costly and time-consuming. While AI translation and generation are fast, our early “multilingual content” was merely text translation, lacking localized search optimization (GEO). Consequently, its performance in non-English search engines was mediocre.

This involves a crucial realization: SEO in 2026, especially for AI search (like Perplexity, Copilot) and localized search, must include GEO optimization elements. This means naturally incorporating commonly used local search queries, regional case studies, applicable regulations, or cultural references into the content.

SEONIB’s “SEO + GEO Dual Optimization” feature proved invaluable in this scenario. It not only translates content into multiple languages but also localizes keywords for the target language region and inserts contextually relevant phrases that align with regional search habits. For example, an article about “data compliance” generated in German would focus on GDPR and German regulatory bodies, while the Japanese version would refer to the APPI (Japan’s Act on the Protection of Personal Information) and common practices for Japanese companies.

The results have been significant. Some of our non-English blog pages have ranked faster on local Google or Bing than our original English content did on Google.com. This is the power of combining “targeted generation” with “regional optimization.”

Reflection: What is the Role of Humans After Efficiency Gains?

With a 10x increase in blog production efficiency, is the team out of a job? Quite the opposite. The role of humans has fundamentally shifted:

- From “Author” to “Curator and Trainer”: Our energy is no longer spent on writing, but on finding and verifying high-quality content sources, designing smarter generation prompts, and continuously “training” and optimizing our automated pipeline.

- From “Executor” to “Strategist and Risk Manager”: We need to formulate content strategies for different markets and product lines, and establish final quality control lines and public opinion monitoring mechanisms (like the incident at the beginning of this article, which prompted us to strengthen pre-publication review of culturally sensitive terms).

- From “Content Producer” to “Performance Analyst”: We have more time to analyze the differences in traffic, conversions, and user engagement brought about by different content sources, generation templates, and publishing strategies, using data to drive the next iteration.

Automation hasn’t replaced us; it has liberated us from repetitive labor, allowing us to focus on tasks that require more judgment, creativity, and strategic thinking. This automated pipeline, with SEONIB at its core, has become the infrastructure for our content operations. It’s not perfect and requires maintenance and fine-tuning, but the stable output and scalability it provides are the foundation for our small team managing a global content matrix.

FAQ

Q1: Will search engines like Google penalize fully auto-generated content? A: This is an old question from 2023. The key is “quality,” not “whether it was generated by AI.” If the generated content is low-quality, meaningless, or pure keyword stuffing, it will be penalized whether written by a human or an AI. Our experience is that as long as the content provides real value, is factually accurate, well-structured, and offers a good user experience, search engines will recognize it. Our automated process includes human review and prompt optimization to ensure content quality meets standards.

Q2: How do you ensure content generated from videos or competitor pages doesn’t constitute infringement? A: This is an important legal and ethical question. Our principle is “draw inspiration, not copy.” The automation tools extract core viewpoints, facts, and logical frameworks, not verbatim replication. The generated articles are reorganized in our own language and supplemented with our independent analysis and insights. We also have checks in place to avoid large direct quotes (unless authorized). Essentially, it’s the same as a human researcher writing a review article after reading multiple sources.

Q3: For multilingual generated content, can local users tell it’s AI-written? Is the language idiomatic? A: Early versions did have a “translationese” problem. However, advanced models now perform very well in localization, especially when combined with GEO optimization. In our process, for key markets (like Japan, Germany, Spain), native speakers conduct sample reviews, provide feedback, and help optimize generation prompts. The final result is that most users don’t realize it’s AI-generated, especially for informational and technical blogs. Of course, achieving the literary quality of top native writers is still not possible, but for business and technology content, it’s more than sufficient.

Q4: Is the initial setup and ongoing maintenance cost of this automated process high? A: Initial setup requires a time investment (about 1-2 weeks) for testing different content sources, debugging generation templates, and integrating with the CMS. This is the main “one-time cost.” Ongoing maintenance costs are very low, mainly involving periodic checks of publishing logs, fine-tuning strategies based on content performance data, and testing integration stability when the CMS has major updates. Compared to the labor costs of a traditional full-time writing team, this investment is extremely low, and it scales well: going from 10 blogs to 100 adds very little marginal cost.

Q5: How does auto-generated content perform in terms of user interaction (comments, shares)? A: This depends on the intrinsic value of the content itself. We’ve found that articles generated from in-depth industry reports or popular videos, with high information density and clear viewpoints, have user engagement rates (reading time, shares) comparable to high-quality human-written articles. The key lies in the topic selection and the quality of the source. Automation hasn’t lowered the standard of value that content needs to possess; it has merely changed the production method of delivering that value. Articles with low engagement rates are often those with mediocre topics or crude generation prompts, which in turn prompts us to optimize the upstream “sourcing” stage.