SEONIB FAQs and Advanced Techniques: Making AI Writing Google-Compliant

In content operations in 2026, the question is rarely “whether AI can write.” It is “whether AI-written content is truly accepted by search engines.” Many teams confidently deployed automated content systems, only to be puzzled months later: the articles are fluent and complete, yet traffic stays flat. The problem isn’t the AI itself, but our understanding of how Google “reads” content in the new era.

The Initial Misconception: Equating “Compliance” with “Keyword Stuffing”

In the early days, many operators simplified SEO rules into a set of mechanical instructions: keyword density, title structure, meta description length. They instructed AI to strictly adhere to these numerical metrics, producing a batch of technically “perfect” articles. The result? Smooth indexing, but stagnant rankings. We had a project where we generated 50 meticulously structured articles for a niche tool, and within three months, only a few long-tail keywords brought in a handful of visits. Checking the logs, Google’s crawlers visited frequently, but the pages consistently hovered on the second or third page.

This made us realize that Google’s algorithms, especially the systems that have evolved over years of battling AI content, have long since moved beyond superficial feature matching. They now evaluate a piece of content’s “intent satisfaction” and “information evolution trajectory.” An article driven purely by keywords, even if formatted correctly, presents its information as a static stack, lacking the natural shifts of focus and contextual build-up that human writers employ.

The Turning Point: Introducing Intent Mapping and Dynamic Optimization

The change occurred after we incorporated the “user search journey” into the core of our generation process. Instead of just inputting a list of keywords, we began constructing “question clusters” and “intent scenarios.” For example, for “beginner crochet,” we no longer just generated one tutorial. We had the system associate a series of real, sequential follow-up questions like: “How to choose your first crochet hook set,” “Common reasons for first project failure,” “How to read crochet pattern symbols.”

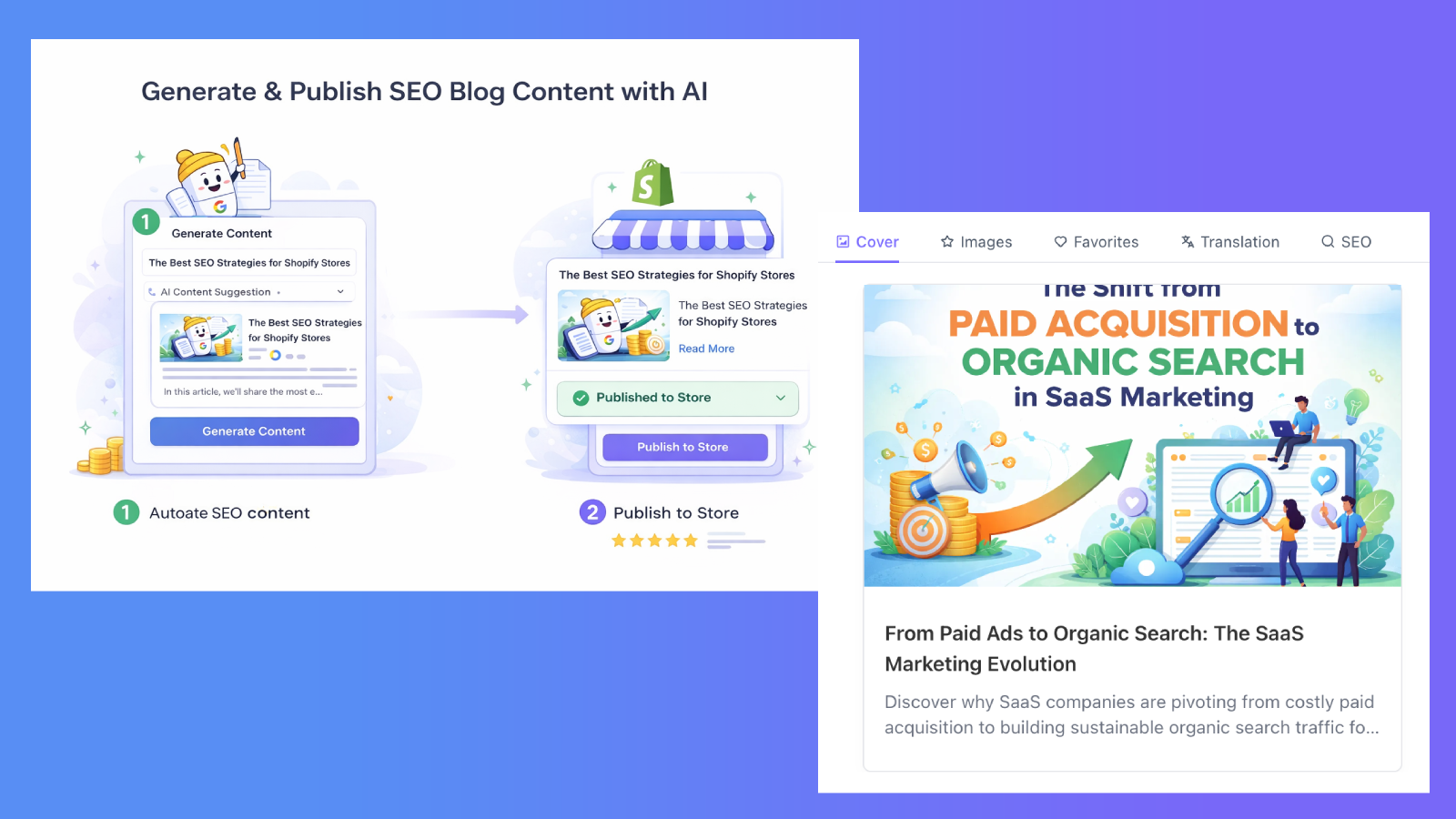

It was then that we started using SEONIB to assist this process. Its value lies not in replacing our thinking, but in providing a framework that automatically clusters scattered search data (like PAA questions, related search terms) into a logically hierarchical topic tree. The first content package we generated included a complete chain from “absolute beginner questions” to “advanced needs after the first project.” After publishing, we observed no significant change in indexing speed, but internal linking weight and dwell time data for the pages began to improve. Google seemed more inclined to treat this series of pages as a “knowledge unit” rather than isolated information points.
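The clustering step can be illustrated with a minimal sketch. It is not SEONIB's API or its actual algorithm: the stage names, the cue words in `STAGE_CUES`, and the function `build_topic_tree` are all hypothetical, and a real system would presumably derive stages from search data rather than from a hand-written cue list.

```python
from collections import defaultdict

# Hypothetical cue words per journey stage (an assumption for illustration).
STAGE_CUES = {
    "getting started": ("choose", "beginner"),
    "troubleshooting": ("fail", "mistake"),
    "skill building": ("read", "pattern"),
}


def build_topic_tree(topic, questions):
    """Group questions under the first stage whose cue word appears in them."""
    stages = defaultdict(list)
    for question in questions:
        text = question.lower()
        stage = next(
            (
                name
                for name, cues in STAGE_CUES.items()
                if any(cue in text for cue in cues)
            ),
            "unsorted",  # questions with no matching cue are kept for manual review
        )
        stages[stage].append(question)
    return {"topic": topic, "stages": dict(stages)}


tree = build_topic_tree(
    "beginner crochet",
    [
        "How to choose your first crochet hook set",
        "Common reasons for first project failure",
        "How to read crochet pattern symbols",
    ],
)
for stage, items in tree["stages"].items():
    print(stage, "->", items)
```

Each stage then becomes one branch of the content package, so the published articles follow the order in which a beginner actually asks these questions.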

Advanced Techniques: Controlling Information “Freshness” and “Deep Iteration”

Another easily overlooked trap is “one-time generation.” AI can instantly produce an article covering all aspects, but this sometimes goes against the process of real knowledge accumulation. We found that for certain complex topics (like “advanced amigurumi crochet techniques”), generating a single ultimate guide was less effective than producing a basic introduction and then, over the following weeks, automatically adding more in-depth “update articles” or “FAQ articles” based on actual search feedback and community discussions.

SEONIB’s continuous operation model played a role here. After publishing the core article, we configured the system to keep monitoring search trends for related long-tail keywords and emerging PAA questions. It automatically plans and generates supplementary content, released either as updates to the original article or as new installments in a series. This simulates how a real blog deepens its topics over time. In our experience, search engines appear to favor this “progressive content expansion,” likely because it more closely resembles an active expert continuously contributing knowledge.
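The planning half of that loop can be sketched as a simple comparison between what the published articles already cover and what people are newly asking. This is an illustration only, not how SEONIB works internally: `plan_supplements` and the `min_mentions` threshold are hypothetical names, and the observed questions are assumed to come from whatever trend or PAA source you monitor.

```python
def plan_supplements(covered, observed, min_mentions=2):
    """Return uncovered questions seen at least `min_mentions` times.

    Questions are compared case-insensitively; the result is ordered by
    how often each question was observed, most frequent first.
    """
    covered_keys = {q.strip().lower() for q in covered}
    counts = {}
    for question in observed:
        key = question.strip().lower()
        if key not in covered_keys:
            counts[key] = counts.get(key, 0) + 1
    return sorted(
        (q for q, n in counts.items() if n >= min_mentions),
        key=lambda q: (-counts[q], q),
    )


backlog = plan_supplements(
    covered=["How to read crochet pattern symbols"],
    observed=[
        "how to block amigurumi",
        "How to block amigurumi",
        "how to read crochet pattern symbols",
        "invisible decrease tutorial",
    ],
)
print(backlog)
```

Requiring a question to appear more than once before it enters the backlog is one way to avoid generating an update article for every passing query.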

Compliant “Tone” and “Uncertainty”

The “mechanical feel” most easily identified in AI-generated writing often stems from overly absolute conclusions and narratives without any twists. We began intentionally asking for conditional, hedged statements in our generation instructions. For example, when comparing products, we would ask the AI to point out that “in certain specific scenarios, A might be more suitable, but if you value B’s features, then a certain version of model C might be the hidden winner.” Such conditional and subtly uncertain statements make the content more credible. It reads less like a product manual and more like a review that reflects personal experience and balanced consideration.

Operationally, this requires meticulous prompt design and the injection of rich reference material. You can’t just throw an AI a product link and a few keywords. You need to provide snippets of real user reviews, points of contention from forums, or even contradictory performance test data. By having the AI sift through and judge these “raw materials,” the resulting content will possess that valuable, uniquely human “analytical trace.”
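One way to make this repeatable is to assemble the prompt from the raw materials instead of writing it by hand each time. The sketch below is an assumption about how such a helper might look: `build_review_prompt` and its wording are hypothetical, and the output is plain text that you would pass to whichever generation tool you use.

```python
def build_review_prompt(product_a, product_b, reviews, disputes):
    """Assemble a comparison prompt that forces the model to weigh raw material."""
    if not reviews or not disputes:
        # Without source material the model falls back to generic product copy.
        raise ValueError("provide at least one review and one point of contention")
    lines = [
        f"Compare {product_a} and {product_b} for a reader choosing between them.",
        "Weigh the source material below. Where it conflicts, say so and explain "
        "which scenario each side fits.",
        "Avoid absolute verdicts; state the conditions under which each option wins.",
        "",
        "User reviews:",
        *[f"- {review}" for review in reviews],
        "",
        "Points of contention:",
        *[f"- {dispute}" for dispute in disputes],
    ]
    return "\n".join(lines)


print(
    build_review_prompt(
        "Hook set A",
        "Hook set B",
        reviews=["The grips on A get slippery after an hour."],
        disputes=["Forum users disagree on whether B's sizes run small."],
    )
)
```

Rejecting an empty review or dispute list is deliberate: it keeps a bare product link and a few keywords from ever reaching the generation step.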

Pitfalls and Opportunities in Multilingual Content

When targeting global markets, multilingual generation appears to be a significant advantage, but it also brings new compliance challenges. Directly translating core English articles into Chinese or Japanese often leads to misalignments in cultural context and search intent. For “crochet,” English-speaking and Chinese-speaking communities differ widely in popular projects, tool preferences, and learning difficulties. We once had Japanese pages that were indexed well but received almost zero clicks, because they were direct translations.

The solution is “intent localization.” We use the system to analyze search trend data and popular questions from different language regions, generating independent content trees for each market, even if the core topic is the same. For instance, English content might focus more on “quickly completing creative gifts,” while Chinese content delves deeper into “skill refinement and work display.” This requires backend support for robust multilingual data sources, and generation strategies cannot be uniform; they must be configured per market.
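Per-market configuration can be sketched as a small lookup that refuses to guess. The class `MarketStrategy`, the `STRATEGIES` table, and `strategy_for` are hypothetical names for illustration; only the two focus strings come from the examples above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MarketStrategy:
    locale: str
    focus: str  # the angle the content tree for this market is built around


# One entry per market; nothing is inherited from another language.
STRATEGIES = {
    "en": MarketStrategy("en", "quickly completing creative gifts"),
    "zh": MarketStrategy("zh", "skill refinement and work display"),
}


def strategy_for(locale):
    """Return the configured strategy, or fail loudly for unconfigured markets."""
    try:
        return STRATEGIES[locale]
    except KeyError:
        raise LookupError(
            f"no strategy configured for {locale!r}; research this market "
            "instead of translating another one"
        ) from None


print(strategy_for("zh").focus)
```

Raising an error for an unconfigured locale, rather than silently falling back to the English strategy, is the code-level equivalent of not shipping a direct translation.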

Observations and Adjustments in Continuous Operation

Once the automated system is set up, the real operation has just begun. We’ve developed a habit of reviewing “content performance reports” weekly, but we don’t focus on simple traffic numbers. We pay more attention to:

- Which articles attract a high percentage of “related search” clicks? (This indicates the content successfully linked to users’ derivative intent.)

- Which articles have unusually low bounce rates and lead to multi-page views within the site? (This indicates the content has built an effective knowledge guidance path.)

- Which newly published content, despite low initial traffic, quickly gains internal recommendation traffic from earlier articles? (This indicates the content ecosystem is beginning to form.)

These metrics help us continuously fine-tune our generation strategies: strengthening coverage of certain intent clusters, cutting down on overly broad but ineffective topic branches, or even adjusting the narrative structure of article openings (e.g., shifting from directly answering questions to first recounting a common misconception).
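The three questions in the weekly review can be turned into a rough filter over a performance report. This is a sketch under stated assumptions: the field names in `ArticleStats` and every threshold in `classify` are hypothetical, and sensible values depend on your own analytics setup and baseline.

```python
from dataclasses import dataclass


@dataclass
class ArticleStats:
    title: str
    related_search_share: float     # fraction of clicks arriving via related searches
    bounce_rate: float              # fraction of single-page sessions
    pages_per_session: float
    internal_referral_share: float  # fraction of visits coming from other articles
    days_since_publish: int


def classify(
    article,
    *,
    related_min=0.3,
    bounce_max=0.4,
    pages_min=2.0,
    internal_min=0.25,
    new_within_days=30,
):
    """Return which of the three review signals an article shows."""
    signals = []
    if article.related_search_share >= related_min:
        signals.append("derivative intent")
    if article.bounce_rate <= bounce_max and article.pages_per_session >= pages_min:
        signals.append("guidance path")
    if (
        article.days_since_publish <= new_within_days
        and article.internal_referral_share >= internal_min
    ):
        signals.append("ecosystem forming")
    return signals


report = [
    ArticleStats("Choosing a hook set", 0.35, 0.30, 2.4, 0.10, 90),
    ArticleStats("Blocking FAQ", 0.05, 0.70, 1.1, 0.40, 7),
]
for article in report:
    print(article.title, "->", classify(article))
```

Articles that show no signal for several weeks in a row are the candidates for the pruning described above.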

Making AI writing compliant with Google’s rules in 2026 is no longer a technical configuration issue, but a matter of content strategy and cognitive framework. Tools (like SEONIB) provide efficient execution and data integration capabilities, but the core “intent map” and “knowledge evolution model” still require operators to draw them based on a deep understanding of real users and the search ecosystem. The mark of success is not whether an article is indexed, but whether your AI-generated content network begins to grow and connect naturally within the search engine’s knowledge graph, like a living organism, continuously attracting visitors with genuine questions.

FAQ

Q: Can AI-generated content truly be recognized and fairly ranked by Google?
A: Yes, but only if the content effectively satisfies search intent, rather than just matching keywords. We’ve observed that articles that mimic high-quality human-written content in their information structure, question coverage, and narrative logic perform no differently in rankings than human-written articles. Google’s algorithms focus on evaluating content value, not tracing the creation method.

Q: How can I prevent AI content from sounding monotonous and lacking personality?
A: Inject “conflicting data” and “scenario-based trade-offs.” Don’t just provide standard product information. Offer user complaints, gray areas in performance comparisons, and reversals of advantages under specific conditions. Let the AI analyze and judge these complex materials, and the resulting content will naturally carry unique points of view.

Q: How should the automated generation frequency be set? Will publishing one article per day be considered spam?
A: Frequency itself is not the issue; the problem lies in content relevance and incremental value. Publishing isolated, thematically scattered articles daily carries high risk. A better model is: each week, focus on 1-2 core topics and generate a main article along with several in-depth supplementary, FAQ, or scenario expansion articles. This forms a natural content cluster, making it easier for search engines to recognize it as a valuable vertical resource.

Q: Do I need to manually review every AI-generated article?
A: Strongly recommended initially. The purpose of manual review is not to edit text, but to assess the “completeness of intent coverage” and “logical fluency” of the content. After a few weeks, once the generation strategy is stable and content performance data is good, you can transition to sample reviews and strategy adjustments based on data metrics. Letting it run completely unchecked can easily lead to inefficient content branches in unknown directions.
